The promise of machine learning, artificial intelligence, and automation lies in their ability to complete human tasks more efficiently & effectively. Algorithms can be trained to mimic human behavior, but what happens when the human developing the algorithm inadvertently allows bias into the training process? In the rush to achieve corporate savings through automation, algorithm developers must still account for bias in their models, or they risk sacrificing those cost savings to litigation, regulation, and lost sales.

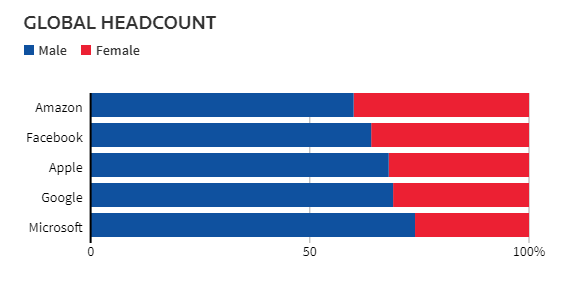

In 2018 Amazon had to scrap an internal recruiting tool because the algorithm was biased against women. Amazon had invested multiple years of time & resources into this tool, but they still managed to allow this gender bias to creep in. The bias is not even particularly surprising given the historical male dominance of the tech industry that was likely captured in their training data. If this sort of mistake can happen to a data science innovator such as Amazon, what are the implications for firms without a depth of analytical talent?

As enterprises increasingly leverage data and algorithms to make critical decisions, they also risk inadvertently deploying algorithms or models that automatically take actions or propose decisions that are out of alignment with organizational values, violate regulations, or expose the organization to legal risk. This is true not only for high-impact public-facing algorithms in traditionally more regulated industries like finance or medicine, but also for novel public-facing algorithms in lightly regulated industries, and even for internally facing algorithms such as those an HR department might consider using.

The Need for Algorithm Auditing Services

Audits are a tried-and-true approach to ensuring compliance with a set of standards, and they have been applied for many decades in domains like finance. An audit is typically performed against a defined set of compliance standards that a process must meet. The business implements the process under those standards. After some interval of time, the business calls in an independent party to verify that the standards are being followed. These types of procedures are commonplace in areas such as information security and data privacy.

Due to the complexity of algorithms and the relative immaturity of the systems in which they are deployed, we haven’t yet seen the proliferation of quality assurance standards and auditing in machine learning and data science. However, there are louder and more frequent calls for algorithms to be audited rather than naively trusted simply because of their intimidating and supposedly unbiased mathematical foundations, superhuman accuracy, or black box inscrutability. Indeed, academic institutes, professional guilds, and entire conferences are dedicated to understanding issues related to algorithmic fairness, accountability, and transparency and developing corresponding rigorous quantitative techniques.

Even in the absence of enforceable compliance regulations or standards, data scientists and the organizations they support still have an obligation to reduce the risk of harm caused by models and consistently assure model quality. As regulatory compliance standards for machine learning eventually emerge, those companies that have invested in their algorithmic quality assurance will be the most prepared to verify the quality of their algorithms and models.

Audits & MLops Tooling

An algorithm audit process will involve, at a minimum, analyses of model or algorithm inputs and outputs under various scenarios, and potentially also of data sets, code used to train models, and trained model code. There are many tools that help data scientists understand how a model works or whether it is introducing bias; the choice of tool will depend on the organization and the capabilities it is trying to develop. While it may be tempting to think that these analyses only need to be conducted once before algorithm deployment, this is not the case.
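One simple way to analyze model inputs and outputs under various scenarios is a counterfactual test: score each record several times, changing only a sensitive attribute, and count how often the output changes. The sketch below is illustrative only and is not taken from any specific audit; `counterfactual_flip_rate`, `toy_model`, and the applicant fields are hypothetical names invented for this example.

```python
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


def counterfactual_flip_rate(
    model: Callable[[Record], int],
    records: List[Record],
    attribute: str,
    values: List[Any],
) -> float:
    """Fraction of records whose prediction changes when only `attribute` varies."""
    if not records:
        raise ValueError("records must not be empty")
    flipped = 0
    for record in records:
        # Score the same record once per candidate value of the attribute.
        outputs = {model({**record, attribute: value}) for value in values}
        if len(outputs) > 1:
            flipped += 1
    return flipped / len(records)


def toy_model(record: Record) -> int:
    """Hypothetical screening model that (wrongly) penalizes one gender."""
    score = record["years_experience"]
    if record["gender"] == "F":
        score -= 2
    return 1 if score >= 5 else 0


# Hypothetical applicant records, invented for illustration.
applicants = [
    {"years_experience": 6, "gender": "M"},
    {"years_experience": 10, "gender": "F"},
    {"years_experience": 2, "gender": "M"},
    {"years_experience": 5, "gender": "F"},
]

flip_rate = counterfactual_flip_rate(toy_model, applicants, "gender", ["F", "M"])
print(f"Share of decisions that depend on gender alone: {flip_rate:.2f}")
```

A flip rate of zero does not prove a model is unbiased, since other inputs can act as proxies for the sensitive attribute, but a nonzero rate is direct evidence that the attribute itself drives some decisions.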

One-off analyses can be conducted but unfortunately do not scale well with the complexity of production machine learning systems. From retraining the model on new data to further improvements in model performance, models are rarely deployed to operate without intervention. The challenge then shifts to understanding how quality can be assured consistently throughout the machine learning lifecycle. “MLops” is the practice of applying software quality assurance practices, such as DevOps, to machine learning systems. Mosaic believes these practices are the key to assuring end-to-end quality in the ML lifecycle.

Mosaic Algorithm Auditing Services

Mosaic can audit models or algorithms to help organizations capture value from data & algorithms while also ensuring that algorithm deployment does not lead organizations out of alignment with relevant regulations or organizational values. As investors and consumers alike turn to enterprises for leadership in global sustainability, businesses need to factor sustainability into everything, including algorithmic development.

When conducting an algorithm audit, Mosaic will review existing or proposed regulations or legislation to identify relevant ethical standards for an algorithm and use case, such as non-discrimination based on race or gender. If desired, Mosaic can also work with various algorithm stakeholders to identify and prioritize additional ethical standards that are particular to an algorithm and its context.

Next, Mosaic will develop analyses and experiments to ensure compliance with the identified standards. If the statistical evidence suggests that an algorithm does not comply with the standards, Mosaic will help identify algorithm adjustments that ensure compliance.
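As a hedged illustration of what such an analysis might look like, the sketch below computes per-group selection rates and the ratio between the lowest and highest rate, then compares it to 0.8, the "four-fifths" rule of thumb used in US employment-selection guidance. This is an assumption chosen for the example, not a description of Mosaic's methodology; the function names and the decision data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Favorable-outcome rate per group from (group, outcome) pairs, outcome in {0, 1}."""
    totals: Dict[str, int] = defaultdict(int)
    selected: Dict[str, int] = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        selected[group] += outcome
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group received a favorable outcome")
    return min(rates.values()) / highest


# Hypothetical model decisions: group A is selected 6 of 10 times, group B 3 of 10.
decisions = [("A", 1)] * 6 + [("A", 0)] * 4 + [("B", 1)] * 3 + [("B", 0)] * 7

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
meets_four_fifths = ratio >= 0.8  # threshold is an assumption for this sketch

print(f"selection rates: {rates}")
print(f"disparate impact ratio: {ratio:.2f}, meets four-fifths rule: {meets_four_fifths}")
```

A ratio like this is only a screening statistic. With small samples it should be paired with a significance test, and the appropriate standard depends on the regulations identified for the use case.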

Furthermore, Mosaic can help organizations leverage MLops best practices to ensure that evaluation is performed for every model that is moved to production, preventing problematic models from being released. Post-deployment model monitoring can ensure that issues with models or the data fed into them are identified and handled appropriately without potentially-costly delays. Integrated quality assurance and model monitoring provide peace of mind and empirical evidence that your deployed models or algorithms continue to be in alignment with relevant regulations and your organizational values, while still empowering data scientists to innovate and increase the value that models provide.
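One common post-deployment check is to compare the distribution of a model input or score in production against the distribution seen at training time. The sketch below uses the Population Stability Index (PSI) for this; the bin edges, the sample data, and the 0.2 alert threshold (a frequently quoted rule of thumb) are all assumptions made for illustration, not values from the original text.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values per bin [edges[i], edges[i+1]); out-of-range values go to the end bins."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) - 1)
    for value in values:
        idx = 0
        while idx < len(counts) - 1 and value >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    return [count / len(values) for count in counts]


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for p_exp, p_act in zip(bin_fractions(expected, edges), bin_fractions(actual, edges)):
        # Floor empty bins at eps so the logarithm stays defined.
        p_exp, p_act = max(p_exp, eps), max(p_act, eps)
        psi += (p_act - p_exp) * math.log(p_act / p_exp)
    return psi


# Hypothetical model scores at training time and in production.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live_scores = [0.6, 0.7, 0.8, 0.9, 0.7, 0.8, 0.9, 0.95]

psi_same = population_stability_index(training_scores, training_scores, edges)
psi_live = population_stability_index(training_scores, live_scores, edges)
ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff

print(f"PSI against itself: {psi_same:.3f}")
print(f"PSI against live data: {psi_live:.3f}, alert: {psi_live > ALERT_THRESHOLD}")
```

Run on a schedule, a check like this can flag a shift in the data before it shows up as degraded or biased decisions, at which point the fairness analyses can be repeated on the new data.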

Why Mosaic?

We now experience the results of machine learning models on a frequent basis through online interaction with news sites that learn our interests, retail websites that provide automated offers that are customized to our buying habits, and credit card fraud detection that warns us when a transaction occurs that is outside of our normal purchasing pattern. There are a number of risks in deploying these algorithms, and businesses need to be prepared to explain the mechanics and provide evidence that models are meeting relevant standards.

Mosaic has spent the past 16 years developing & deploying all flavors of data science for a diverse set of customers. Acting as a neutral third party, we can quickly understand the inputs, outputs, algorithms, and bias risks, and provide you with a plan to protect your business from litigation, regulation, and negative market sentiment. We go where the data tells us and help our customers operate effectively without fear of bias.

Want to learn more about our algorithm auditing services? Please contact us here.